Posted on March 8, 2026

Students are already using ChatGPT for OSCE practice. You can find threads on r/medicalschooluk where people share prompt setups, voice mode tips, and patient briefing note workarounds. This isn't a trend being pushed by medical educators. It's students figuring things out themselves. This post is an honest breakdown of what ChatGPT can and can't do for OSCE prep, so you can decide whether it's worth your time.

The most common setup goes like this: a student copies a patient briefing note or actor script (from Quesmed, their university resources, or a shared drive), pastes it into ChatGPT, and asks it to role-play as the patient. They then switch to voice mode and run through the station out loud.

Some students get more elaborate. They'll give ChatGPT a full patient persona (age, presenting complaint, social history, emotional state) and ask it to stay in character for the duration. Others use it more loosely, treating it as an on-demand history-taking partner when no one else is available at midnight before a clinical placement day.

The appeal is obvious. It requires no coordination, no scheduling, no asking someone to give up their evening. You open the app and go.

For what it is, ChatGPT handles the basics reasonably well.

It's available at any time, costs nothing beyond a standard account, and has no scheduling friction whatsoever. For a student who needs to run through a respiratory history at 11pm, that accessibility is genuinely useful.

The role-play quality on straightforward history-taking stations is decent. If you prompt it to, ChatGPT can simulate patient emotions reasonably well: anxiety, reluctance to disclose, embarrassment about symptoms. It won't just fire answers at you if you set up the persona properly. For practising open questions, active listening cues, and ICE (ideas, concerns and expectations), it gives you something real to work with.

It also works across specialties. Whether you need a psychiatric history, a paediatric scenario, or a breaking bad news station, ChatGPT can accommodate it as long as you provide the context.

This is where honest assessment matters, because the limitations aren't minor inconveniences. Some of them fundamentally undermine what OSCE practice is supposed to achieve.

Timing is broken. OSCE stations run to 7 or 8 minutes. ChatGPT does not know this and does not care. Its patient responses tend to be longer than a real simulated patient (SP) would give, which throws your pacing off in ways that are hard to detect in the moment. If you practise consistently with ChatGPT, you may be training yourself to run over time without realising it. There is no alarm, no timekeeper, no warning at 6 minutes.

Language drift. Users have reported ChatGPT switching languages mid-consultation, particularly when voice mode picks up ambiguous input. For a student running a history-taking station, having the patient suddenly respond in a different language is more than a minor bug. It breaks immersion completely and wastes the session.

No mark-scheme feedback. This is the biggest gap. After you finish a station with a real SP or a practice partner, you can go through the checklist: did you ask about red flags? Did you cover ICE? Did you close properly? ChatGPT cannot do this. It has no awareness of the mark scheme, no ability to tell you which items you hit and which you missed. It will respond to your history-taking, but it cannot evaluate it. You finish the station with no objective sense of how you performed.

Setup friction. The convenience is partly illusory. To run a proper session, you have to write or paste a detailed patient brief, instruct ChatGPT on how to behave, and often correct it mid-session when it drifts. Students who do this well spend 10 to 15 minutes on setup for a 7-minute station. That overhead adds up, and it repeats every single session because there is no saved scenario format.

Character breaks. When you correct ChatGPT, it tends to step out of character and acknowledge the correction as an AI. A real SP stays in role no matter what you say. This matters more than it seems. Part of what makes practice valuable is the discomfort of not being able to meta-comment your way out of an awkward moment.

If you want to use it, this prompt template will get you a more realistic session than most student setups. Copy and paste it, fill in the details, then switch to voice mode.

You are playing the role of a patient in a UK medical school OSCE station. Stay in character for the entire conversation. Do not break character, offer feedback, or acknowledge being an AI until I explicitly say "end station."

Patient details:

- Name: [e.g. Mr James Okafor]

- Age: [e.g. 58]

- Presenting complaint: [e.g. worsening shortness of breath over 3 weeks]

- Key history: [e.g. known COPD, smoker, recently started a new job with dust exposure]

- Emotional state: [e.g. anxious, slightly dismissive of symptoms, doesn't want to worry family]

- Information to withhold initially: [e.g. has been waking up at night, hasn't mentioned to anyone]

- Information to reveal only if asked directly: [e.g. chest pain on exertion, ankle swelling]

Keep your responses to 1-3 sentences. Do not volunteer information unprompted. Respond naturally as the patient would, not as a medical textbook.

When I say "end station," come out of character and tell me which key history points I asked about and which I missed, based on what you know.

The final instruction asking for a debrief is the closest you can get to mark-scheme feedback with this tool. It is not the same as a structured checklist, but it is better than nothing.

Use it when you have 20 minutes, it is late, and you need low-friction practice. A quick cardiovascular history run-through at 2am, with no setup beyond pasting a brief, is genuinely better than not practising at all. For getting repetitions in on history structure and open questioning, it earns its place.

Do not rely on it if you need timed practice. Do not use it as your primary feedback mechanism. And do not use it in the final week before your OSCE, when accurate, time-pressured simulation matters most. The gaps that are manageable in low-stakes early prep become significant when you are trying to calibrate real exam performance.

For mark-scheme feedback and structured self-assessment, OSCEstop is worth bookmarking. The checklists are detailed and free to use, and running through them out loud after a ChatGPT session partially fills the feedback gap.

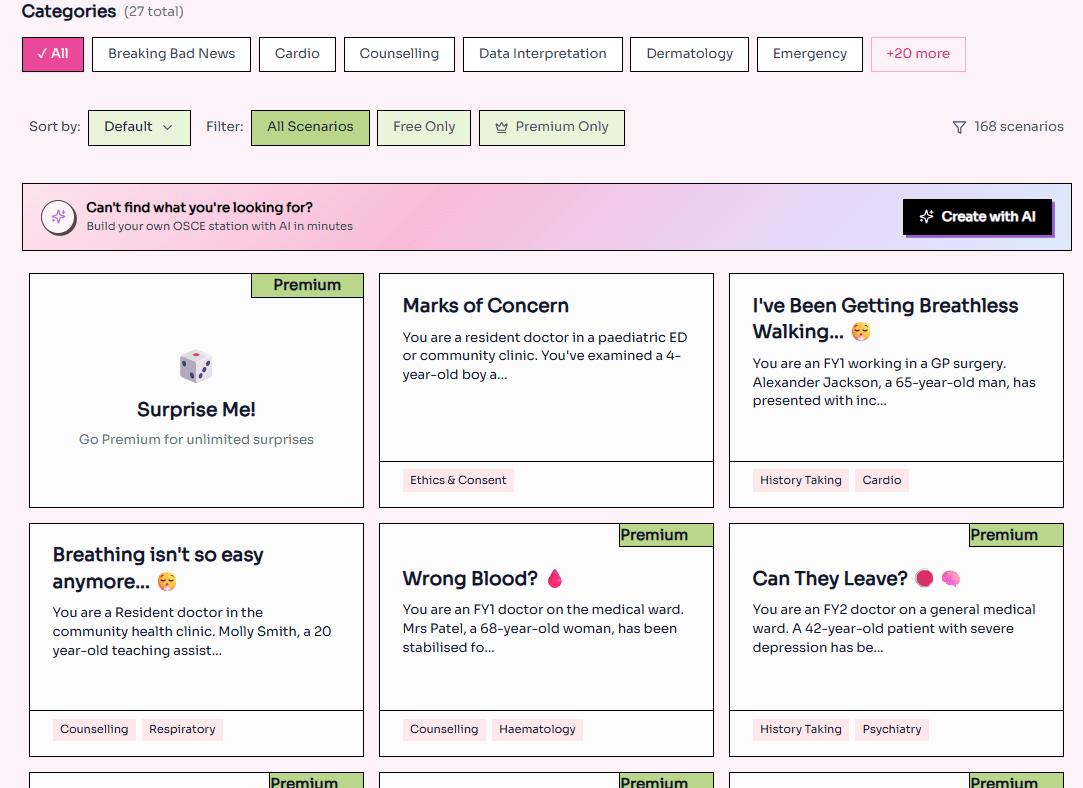

For timed, voice-based AI practice built specifically for OSCE preparation, MLAbuddy is designed for exactly this. The stations are time-limited, the AI patients are built around UKMLA CPSA content, and the feedback is structured around what you actually need to hit. It is not a workaround. It is purpose-built for what ChatGPT is being asked to approximate.

For high-stakes preparation, there is still no substitute for peer practice over video call. The unpredictability of a real person, the social pressure of being observed, and the ability to give and receive honest feedback are things no AI tool currently replicates. Use ChatGPT and dedicated platforms to build fluency. Use real people to test it.

ChatGPT is a decent stopgap. It is worth knowing how to use it. Just go in with accurate expectations of what it can and cannot do.